Rice University’s findings reveal that repeated training on synthetic data can result in ‘Model Autophagy Disorder’, degrading the quality of generative AI models. Continued reliance on synthetic data without fresh inputs can doom future AI models to inefficiency and reduced diversity.

Generative artificial intelligence (AI) models such as OpenAI’s GPT-4o or Stability AI’s Stable Diffusion excel at creating new text, code, images, and videos. However, training these models requires vast amounts of data, and developers are already struggling with supply limitations and may soon exhaust training resources altogether.

Given this data scarcity, using synthetic data to train future generations of AI models may seem like an alluring option to big tech for a number of reasons. AI-synthesized data is cheaper than real-world data and virtually limitless in supply; it poses fewer privacy risks (as in the case of medical data); and in some cases, synthetic data may even improve AI performance.

However, recent work by the Digital Signal Processing group at Rice University has found that a diet of synthetic data can have significant negative impacts on future iterations of generative AI models.

The Risks of Autophagous Training

“The problems arise when this synthetic data training is, inevitably, repeated, forming a kind of feedback loop, what we call an autophagous or ‘self-consuming’ loop,” said Richard Baraniuk, Rice’s C. Sidney Burrus Professor of Electrical and Computer Engineering. “Our group has worked extensively on such feedback loops, and the bad news is that even after a few generations of such training, the new models can become irreparably corrupted. This has been termed ‘model collapse’ by some, most recently by colleagues in the field in the context of large language models (LLMs). We, however, find the term ‘Model Autophagy Disorder’ (MAD) more apt, by analogy to mad cow disease.”

Mad cow disease is a fatal neurodegenerative illness that affects cows and has a human equivalent caused by consuming infected meat. A major outbreak in the 1980s and 1990s brought attention to the fact that mad cow disease proliferated as a result of the practice of feeding cows the processed leftovers of their slaughtered peers, hence the term “autophagy,” from the Greek auto-, which means “self,” and phagy, “to eat.”

“We captured our findings on MADness in a paper presented in May at the International Conference on Learning Representations (ICLR),” Baraniuk said.

The study, titled “Self-Consuming Generative Models Go MAD,” is the first peer-reviewed work on AI autophagy and focuses on generative image models like the popular DALL·E 3, Midjourney, and Stable Diffusion.

Impact of Training Loops on AI Models

“We chose to work on visual AI models to better highlight the drawbacks of autophagous training, but the same mad cow corruption issues occur with LLMs, as other groups have pointed out,” Baraniuk said.

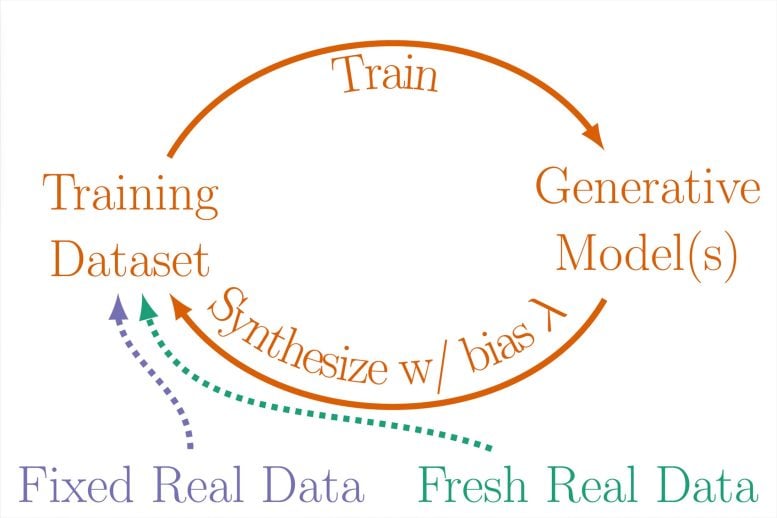

The internet is usually the source of generative AI models’ training datasets, so as synthetic data proliferates online, self-consuming loops are likely to emerge with each new generation of a model. To get insight into different scenarios of how this might play out, Baraniuk and his team studied three variations of self-consuming training loops designed to provide a realistic representation of how real and synthetic data are combined into training datasets for generative models:

- fully synthetic loop: Successive generations of a generative model were fed a fully synthetic data diet sampled from prior generations’ output.

- synthetic augmentation loop: The training dataset for each generation of the model included a combination of synthetic data sampled from prior generations and a fixed set of real training data.

- fresh data loop: Each generation of the model was trained on a mix of synthetic data from prior generations and a fresh set of real training data.
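The way the three loops assemble their training sets can be sketched with a deliberately tiny stand-in. This is not the study's experiment: the "model" here is just a one-dimensional Gaussian refit to its training data each generation, and the names `run_loop`, `REAL_MEAN`, and `REAL_STD`, along with the sample sizes, are assumptions made for illustration. The spread (standard deviation) of the fitted model serves as a rough proxy for diversity. A toy this simple should not be expected to reproduce the behavior the researchers report for image models.

```python
import random
import statistics

# Hypothetical "real" data distribution (an assumption for this toy).
REAL_MEAN, REAL_STD = 0.0, 1.0


def draw(rng, mean, std, n):
    """Sample n points from a Gaussian; stands in for 'generating' data."""
    return [rng.gauss(mean, std) for _ in range(n)]


def fit(data):
    """'Train' the toy model: estimate mean and standard deviation."""
    return statistics.fmean(data), statistics.pstdev(data)


def run_loop(kind, generations=500, n=20, seed=0):
    """Run one self-consuming loop and return the final (mean, std)."""
    rng = random.Random(seed)
    fixed_real = draw(rng, REAL_MEAN, REAL_STD, n)
    mean, std = fit(fixed_real)  # generation 0 sees real data only
    for _ in range(generations):
        synthetic = draw(rng, mean, std, n)  # output of the previous model
        if kind == "fully_synthetic":
            train = synthetic
        elif kind == "synthetic_augmentation":
            train = synthetic + fixed_real  # same real set every generation
        elif kind == "fresh_data":
            train = synthetic + draw(rng, REAL_MEAN, REAL_STD, n)  # new real data
        else:
            raise ValueError(f"unknown loop kind: {kind}")
        mean, std = fit(train)
    return mean, std


for kind in ("fully_synthetic", "synthetic_augmentation", "fresh_data"):
    final_mean, final_std = run_loop(kind)
    print(f"{kind}: mean={final_mean:.3g}, std={final_std:.3g}")
```

In this toy, the fully synthetic loop loses almost all of its spread because estimation error compounds from one generation to the next, while the fresh data loop stays close to the real distribution.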

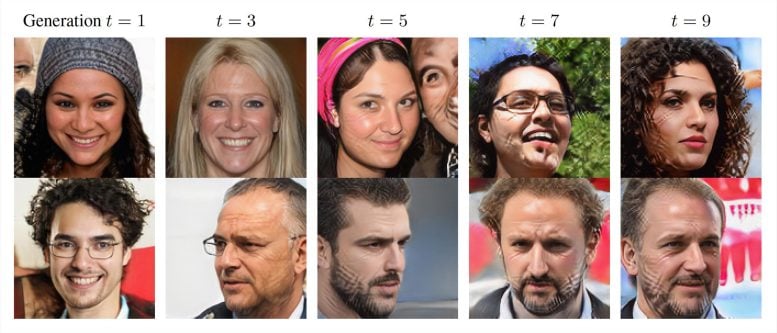

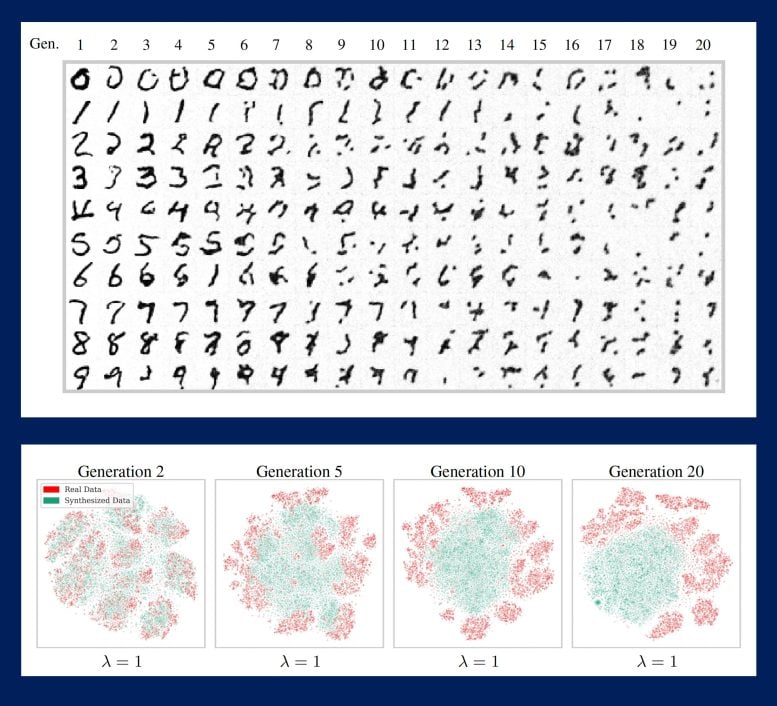

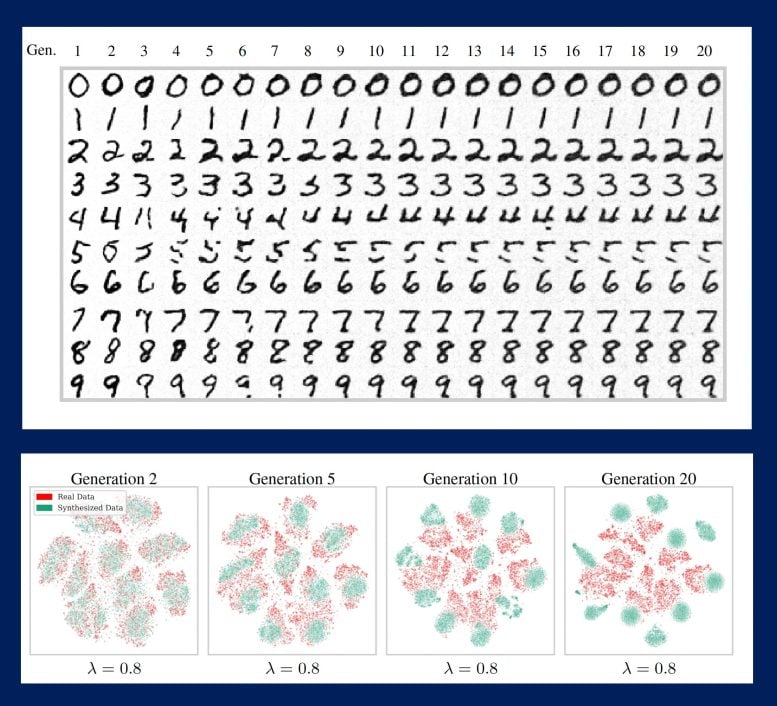

Progressive iterations of the loops revealed that, over time and in the absence of sufficient fresh real data, the models would generate increasingly warped outputs lacking either quality, diversity, or both. In other words, the more fresh data, the healthier the AI.

Consequences and Future of Generative AI

Side-by-side comparisons of image datasets resulting from successive generations of a model paint an eerie picture of potential AI futures. Datasets consisting of human faces become increasingly streaked with gridlike scars, which the authors call “generative artifacts,” or look more and more like the same person. Datasets consisting of numbers morph into indecipherable scribbles.

“Our theoretical and empirical analyses have enabled us to extrapolate what might happen as generative models become ubiquitous and train future models in self-consuming loops,” Baraniuk said. “Some ramifications are clear: without enough fresh real data, future generative models are doomed to MADness.”

To make these simulations even more realistic, the researchers introduced a sampling bias parameter to account for “cherry picking,” the tendency of users to favor data quality over diversity, i.e., to trade off variety in the types of images and texts in a dataset for images or texts that look or sound good. The incentive for cherry-picking is that data quality is preserved over a greater number of model iterations, but this comes at the expense of an even steeper decline in diversity.
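The diversity cost of cherry-picking can be illustrated with a minimal sketch under stated assumptions. The article does not say how the study's sampling bias parameter is defined, so `keep_fraction` below is a hypothetical stand-in: each generation, a one-dimensional Gaussian "model" produces candidates, only the fraction closest to the model's own mean is kept (a crude proxy for "looks good"), and the model is refit on what was kept. The toy tracks diversity only; it does not model quality.

```python
import random
import statistics


def cherry_pick(samples, center, keep_fraction):
    """Keep the samples closest to `center`, a crude proxy for 'high quality'."""
    k = max(2, int(len(samples) * keep_fraction))
    return sorted(samples, key=lambda x: abs(x - center))[:k]


def diversity_after(generations, keep_fraction, n=200, seed=0):
    """Return the model's standard deviation after repeated cherry-picked refits."""
    rng = random.Random(seed)
    mean, std = 0.0, 1.0  # hypothetical starting model
    for _ in range(generations):
        candidates = [rng.gauss(mean, std) for _ in range(n)]
        kept = cherry_pick(candidates, mean, keep_fraction)
        mean, std = statistics.fmean(kept), statistics.pstdev(kept)
    return std


for fraction in (1.0, 0.75, 0.5):
    print(f"keep_fraction={fraction}: std={diversity_after(5, fraction):.3g}")
```

Smaller values of `keep_fraction` mean more aggressive cherry-picking, and in this toy the spread of the model shrinks faster as a result.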

“One doomsday scenario is that if left uncontrolled for many generations, MAD could poison the data quality and diversity of the entire internet,” Baraniuk said. “Short of this, it seems inevitable that as-to-now-unseen unintended consequences will arise from AI autophagy even in the near term.”

Reference: “Self-Consuming Generative Models Go MAD” by Sina Alemohammad, Josue Casco-Rodriguez, Lorenzo Luzi, Ahmed Imtiaz Humayun, Hossein Babaei, Daniel LeJeune, Ali Siahkoohi and Richard Baraniuk, 8 May 2024, International Conference on Learning Representations (ICLR).

In addition to Baraniuk, study authors include Rice Ph.D. students Sina Alemohammad, Josue Casco-Rodriguez, Ahmed Imtiaz Humayun, and Hossein Babaei; Rice Ph.D. alumnus Lorenzo Luzi; Rice Ph.D. alumnus and current Stanford postdoctoral student Daniel LeJeune; and Simons Postdoctoral Fellow Ali Siahkoohi.

The research was supported by the National Science Foundation, the Office of Naval Research, the Air Force Office of Scientific Research, and the Department of Energy.