Like all the big AI companies, Bing has a content policy for its Image Creator software that prohibits the creation of images that encourage sexual abuse, suicide, graphic violence, hate speech, bullying, deception, and disinformation. Some of the rules are heavy-handed even by the usual “trust and safety” standards (hate speech is defined as speech that “excludes” individuals on the basis of any actual or perceived “attribute that’s consistently associated with systemic prejudice or marginalization”). Predictably, this will exclude a lot of perfectly anodyne images. But the rules are the least of it. The more impactful, and interesting, question is how those rules are actually applied.

I now have a pinhole view of AI safety rules in action, and it sure looks as if Bing is taking very broad rules and training its engine to apply them even more broadly than anyone would expect.

Here’s my experience. I’ve been using Bing Image Creator lately to create Cybertoonz (examples here, here, and here), despite my profound lack of artistic talent. It had the usual technical problems (too many fingers, weird faces) and some problems I suspected were designed to avoid “gotcha” claims of bias. For example, if I asked for a picture of members of the European Court of Justice, the engine almost always created images with more women and identifiable minorities than the CJEU is likely to have in the next fifty years. But if the AI engine’s political correctness detracted from the message of the cartoon, it was easy enough to prompt for male judges, and Bing didn’t treat this as “excluding” images by gender, as one might have feared.

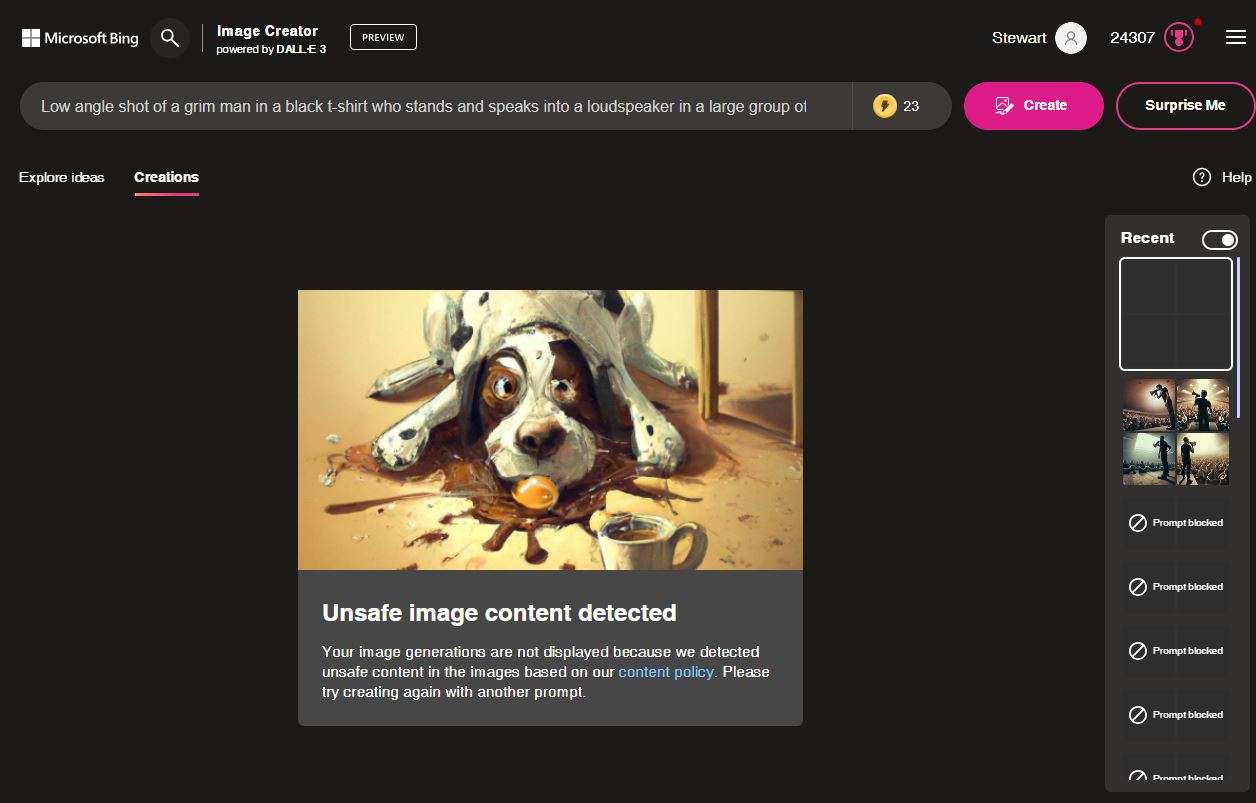

My more recent experience is a little more disturbing. I created this Cybertoonz cartoon to illustrate Silicon Valley’s counterintuitive claim that social media is engaged in protected speech when it suppresses the speech of many of its users. My image prompt was some variant of “Low angle shot of a male authority figure in a black t-shirt who stands and speaks into a loudspeaker in a large group of seated people wearing gags or tape over their mouths. Digital art lo-fi”.

As always, Bing’s first attempt was surprisingly good, but flawed, and getting a usable version required dozens of edits to the prompt. None of the images were quite right. I finally settled for the one that worked best, turned it into a Cybertoonz cartoon, and published it. But I hadn’t given up on finding something better, so I went back the next day and ran the prompt again.

This time, Bing balked. It told me my prompt violated Bing’s safety standards.

After some experimenting, it became clear that what Bing objected to was depicting an audience “wearing gags or tape over their mouths.”

How does this violate Bing’s safety rules? Are gags an incitement to violence? A marker for “[n]on-consensual intimate activity”? In context, those interpretations of the rules are ridiculous. But Bing isn’t interpreting the rules in context. It’s trying to write additional code to make sure there are no violations of the rules, come hell or high water. So if there’s a chance that the image it produces might show non-consensual sex or violence, the trust and safety code is going to reject it.

This is almost certainly the future of AI trust and safety limits. It will start with overbroad rules written to satisfy left-leaning critics of Silicon Valley. Then those overbroad rules will be further broadened by hidden code written to block many perfectly compliant prompts just to ensure that it blocks a handful of noncompliant ones.

In the Cybertoonz context, such limits on AI output are merely an annoyance. But AI isn’t always going to be a toy. It’s going to be used in medicine, hiring, and other critical contexts, and the same dynamic will be at work there. AI companies will be pressured to adopt trust and safety standards, and implementing code, that aggressively bar outcomes that might offend the left half of American political discourse. In applications that affect people’s lives, however, the code that ensures those outcomes will have a lot of unanticipated consequences, many of which no one can defend.

Given the stakes, my question is simple. How do we avoid these consequences, and who is working to prevent them?